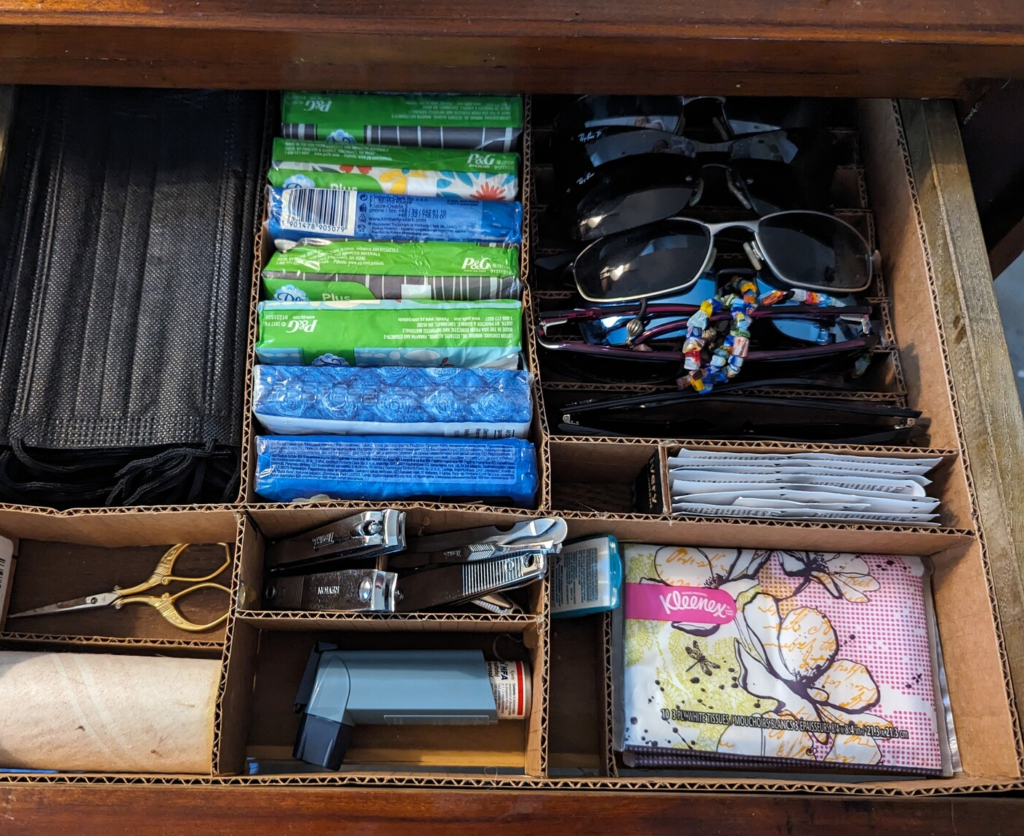

I don’t care what you use your bathroom for. This is what I use my bathroom for.

Somebody needs to think about this stuff...

by justin

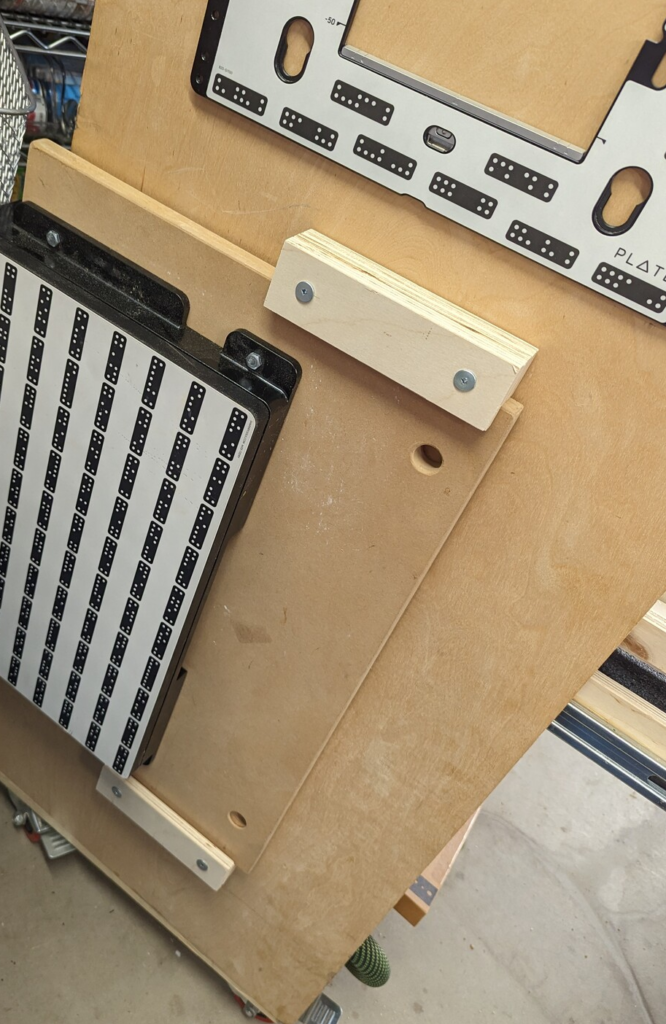

Wood glue does not stick to pre-finished plywood. I know this. I’ve known this for a long time. But did I remember this? No!

by justin

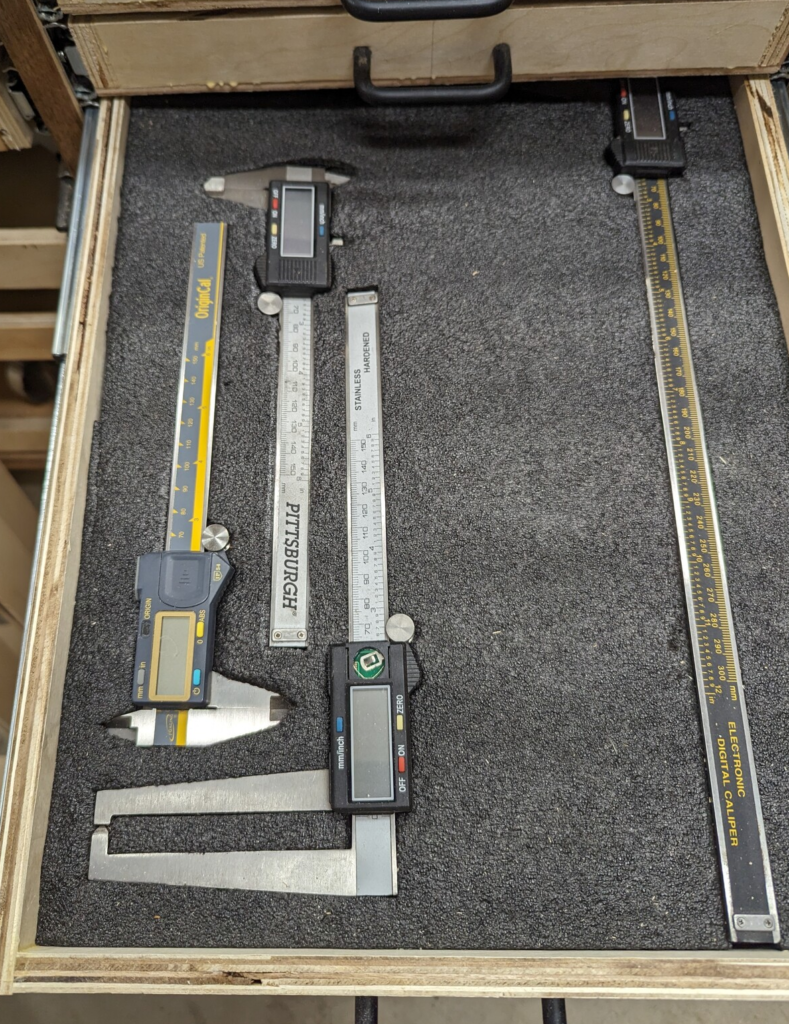

I am still figuring out how best to use Kaizen foam.

by justin

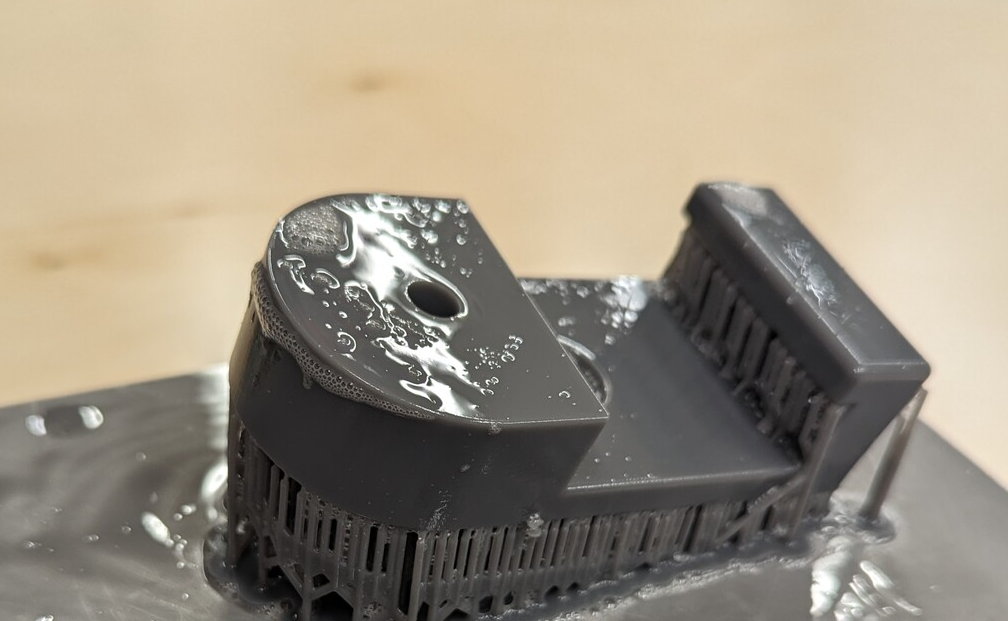

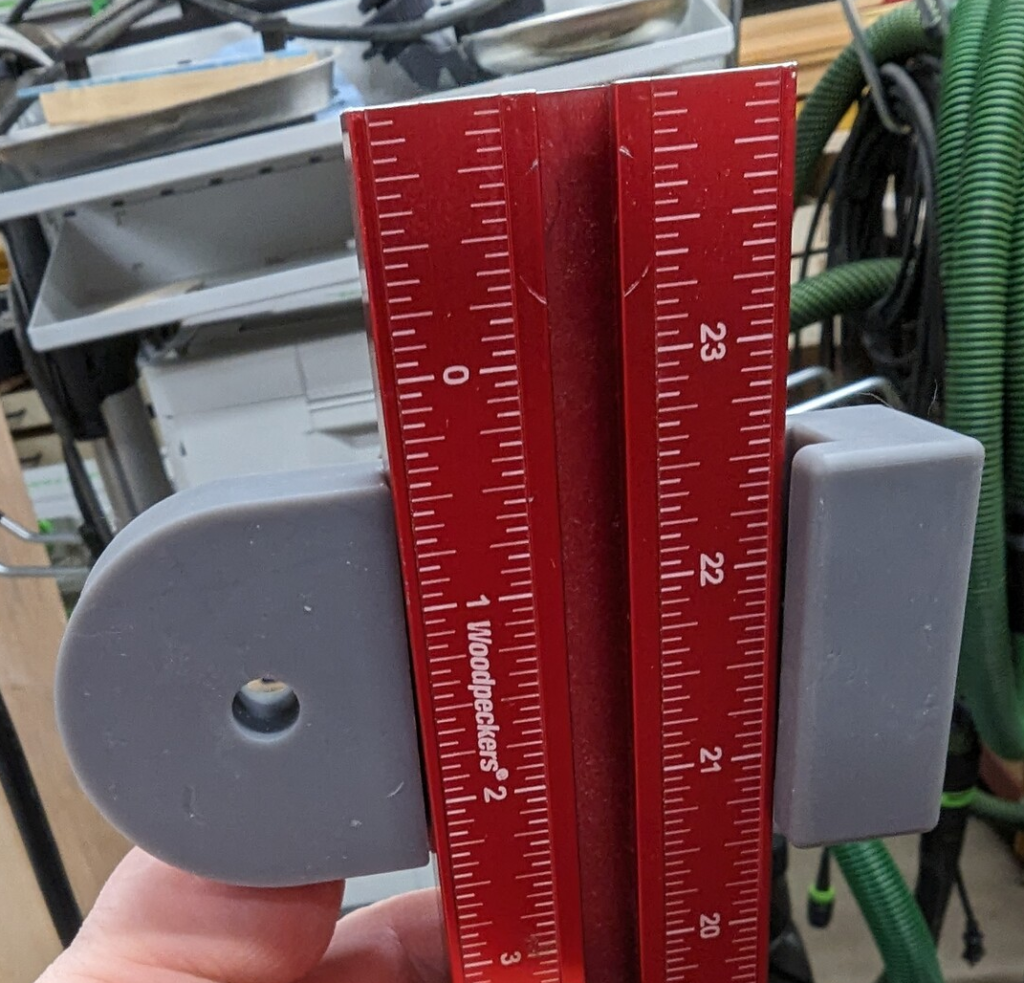

It’s a custom printed bracket…

To hold a story stick…

by justin

On the day I needed to cut down sheets of plywood, it decided to rain. The likelihood of these two events coinciding is not without note.

by justin

Experimenting with white lines. Cannot say I like the look.

by justin

Yes, it could work, but I need to remove the hose garage from the vacuum first.

by justin

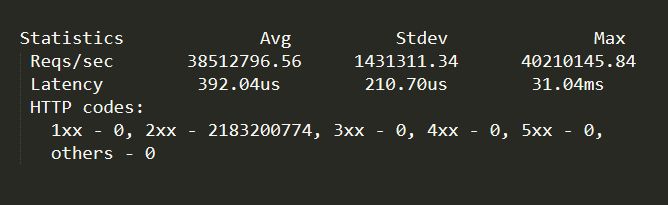

Holy crap that’s a lot of requests per second. 40 million per second, more than 2 billion in a minute, on the test hardware.

Have managed to make the back-end never hit the database for any reads except during start-up, and all database writes go through a WAL queue rather than directly to the database.

The requests are a mixture of request/response objects (which contain DTOs and collections of DTOs in the response) and media such as images and short videos. I don’t know how close we were to maxing out a dual 100Gbit NIC. From my understanding it is some exotic dual 100Gbit setup with four 25Gbit ports on each NIC, though I’m not sure on that as I haven’t physically seen the hardware with my own eyes. But there was still plenty of bandwidth to spare, and quite a bit of CPU to spare too.

Now I will admit, these are synthetic tests, i.e. traffic replay of earlier live sessions, coming from another machine, without a bunch of network hardware in between, so I suspect real-world production usage will be considerably lower.

Configuration is:

MS SQL -> WAL cache -> Kestrel -> In-Memory Query & Object Cache -> NGINX -> Apache Traffic Server

Technically the Kestrel server application is doing its own in-memory/in-process object and object collection caching so that I am not dumbly relying on external caches to do the heavy lifting for me. The Kestrel server application is effectively just a cache filler once it gets going and has answered the initial burst of queries and built internal collections. And the only time Kestrel gets queried (after that initial burst) is if something hasn’t migrated to a more forward position (higher tier?) cache.
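The "cache filler" behaviour described above can be sketched roughly as follows. This is a minimal illustration in Python, not the actual implementation: the `TieredCache` class, the three-tier count, and the `render` origin function are all made-up names, and the promotion policy (copy into every tier on each read) is an assumption chosen for simplicity.

```python
from typing import Callable, Dict, List


class TieredCache:
    """Look a key up tier by tier; only a full miss reaches the origin."""

    def __init__(self, tier_count: int, origin: Callable[[str], bytes]) -> None:
        # tiers[0] stands in for the most forward cache (closest to the client).
        self.tiers: List[Dict[str, bytes]] = [{} for _ in range(tier_count)]
        self.origin = origin
        self.origin_hits = 0  # how often the origin application was queried

    def get(self, key: str) -> bytes:
        for tier in self.tiers:
            if key in tier:
                value = tier[key]
                break
        else:
            # Nothing cached in any tier: query the origin application.
            self.origin_hits += 1
            value = self.origin(key)
        # Promote the value into every tier so later reads stop at tier 0.
        for tier in self.tiers:
            tier[key] = value
        return value


def render(key: str) -> bytes:
    """Hypothetical origin: stands in for the server application."""
    return f"payload for {key}".encode()


cache = TieredCache(tier_count=3, origin=render)
cache.get("/user/1")
cache.get("/user/1")
print(cache.origin_hits)  # the second read never reaches the origin
```

After the first request for a key, the origin counter stops moving, which is the property the post describes: the application only gets asked when something has not yet migrated forward.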

Update:

I have since moved from synthetic replay tests to production requests and our numbers ticked up a bit due to the way we shape our traffic so we’re just cresting 42M RPS, or around 2B+ per minute on a single machine.

I cannot go into a lot of detail on implementation. But I am very happy with the throughput.

There’s still a little headroom, but I suspect I am going to be hitting limitations of the OS, network stack and drivers at that point.

I feed everything through eight separate 25Gbit connections, though tests have shown that I could get far better results by feeding through 32 x 10Gbit connections. Results better than “yeah, but 320Gbit is more than 200Gbit” would suggest. This would be due to packet offload, PCIe bandwidth, RAM <=> NIC DMA constraints, etc, etc.

The majestic monolith literally handles everything, from media to database to server. There are two other containers for logging and housekeeping.

I focused on what I could get out of the hardware. I optimized at each layer, which was the low-hanging fruit, then optimized the more esoteric parts, e.g. read-once/WAL database access pattern, automatically denormalizing data once it enters a caching layer, storing cached data in different data structures depending on its usage, deduplicating (but not normalizing) data where it made sense, updating in-memory cached objects on database writes, deferring all database writes to post-processed batches (housekeeping container), making the client smarter so that it didn’t ask for complex joins and other operations that couldn’t be cached, and so on. Not trusting the client, but assuming the client was smart.
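Two of the items in that list, reading the database once at start-up and deferring writes to batches while keeping the in-memory copy current, can be sketched like this. It is a toy illustration under stated assumptions: a plain `dict` stands in for the database, `WriteBehindStore` is a hypothetical name, and a real queue would also need durability and concurrency handling that this leaves out.

```python
from collections import deque
from typing import Deque, Dict, Tuple


class WriteBehindStore:
    """Serve reads from memory; queue writes and apply them in batches."""

    def __init__(self, database: Dict[str, str]) -> None:
        self.database = database
        # Read once at start-up: after this, reads never touch the database.
        self.cache: Dict[str, str] = dict(database)
        # Pending writes, in arrival order, waiting for the batch job.
        self.pending: Deque[Tuple[str, str]] = deque()

    def read(self, key: str) -> str:
        return self.cache[key]

    def write(self, key: str, value: str) -> None:
        # Update the in-memory object immediately so readers see the change,
        # and defer the database write to the queue.
        self.cache[key] = value
        self.pending.append((key, value))

    def flush(self, batch_size: int = 100) -> int:
        """Apply up to batch_size queued writes; return how many were applied."""
        written = 0
        while self.pending and written < batch_size:
            key, value = self.pending.popleft()
            self.database[key] = value
            written += 1
        return written


db = {"a": "1"}
store = WriteBehindStore(db)
store.write("a", "2")
store.write("b", "3")
print(store.read("a"), db["a"])  # cache is current, database is still behind
store.flush()
print(db)
```

The design trade-off is the usual one for write-behind: readers get fresh data without waiting on the database, at the cost of a window where the database lags the cache.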

There were a lot of bottlenecks that I was able to kick out by being given carte blanche to “fix it, I don’t care what it takes, make it so we can hit reddit numbers.” And then finally just tuning the hell out of the OS, the Mellanox cards, and so on.

by justin

Years ago, 45 years ago, I cut my teeth (programming teeth) on what are now very old microprocessors. The MOS (later Commodore) 6502 (not so obscure), Zilog Z80 (not so obscure), the Commodore 6501 (kind of obscure) and the MOS 7600 (not so obscure).

There was a question on Hacker News a while back about the 7600 and I was sure it was a CPU I had written code for. I was a little fuzzy on the details, recalling how the paddle inputs worked from a different game console.

Single channel sound, four outputs to control an RF modulator, four joystick inputs and a light gun input. Back then, CPUs weren’t exactly what you called sophisticated! No interrupts, no NMI, no external RAM, no real internal RAM, two X & Y registers, I don’t think we even had indirect addressing, it was uphill, both ways, in the snow.

My memory is a little hazy, but I think the 7600 was legitimately the second CPU I learned to write code for, and probably the first CPU I got paid to write for. I recall it came in a few different editions, with hard-coded programs in an internal ROM and this is where my memory gets really bad because I am not sure if I am confusing the 7600 and another CPU, but one of those CPUs had this weird piggy back socket on it to which you could attach an EEPROM, or an ICE, and it would let you run your own code compared to what was built-in to the masked ROM on the microprocessor. There was also a machine that had the EEPROM window that you could wipe with a strong ultra-violet light, and again, I don’t recall if that was the 7600 or another CPU. I don’t recall if the ROM on the microprocessor of this special edition had anything in it, or it was the standard edition mask with a special package. I seem to recall the different 7600 models had different graphics built-in, and you picked the variant based on what game you were trying to create.

Being a pack rat, and prone to stashing away any useful paperwork that isn’t nailed down, I was digging around for some other reason at our storage locker and in one of my several boxes of memories located old programming books and datasheets I haven’t looked at in decades, and I located an article with schematics for building a game console around the 7600 Video Game Array microprocessor, a service manual for repairing the consoles, a few schematics from old game consoles that used the 7600 and a datasheet with pin outs.

The reason I got the job of working with the 7600, at such a tender young age, was because I worked for cheap, was easily exploited in my youthful naivety, and had been hardware hacking on broken 1970s game consoles of various stripes for about four or five years by that point. That summer holiday job in the middle of the school year lasted all of three months before my Dad said to the proprietor “You have to pay my son if you want him to continue working for you.”

I never did get my money.

by justin

“So how tightly coupled is the code?” asked my colleague.

“Our architecture diagram is used as the illustration on page 73 of the Kama Sutra.” I replied.

“Where should we be?”

“Brexit.”

by justin

Goodbye Teithe Din Din / Teith Yr Ewin / Teith yr 拧紧

Always in our hearts.

Teithe Din Din was a poor translation of “Journey of the Nail” or “Journey of the Screw.” It is a combination of Welsh and Chinese. The dog came all the way from Taiwan as a rescue, with a pin in her leg, so her foster family in the US named her “拧紧” which, to a Western ear sounds like “Ding ding” so she became “Ding ding”.

When we adopted her on April 16th 2011, Joyce and I pondered for a while and decided on “Taith Yr Ding Ding” as her name. But when you talk to a vet, they don’t quite get the nuance in the pronunciation, so on the veterinary records she was “Teithe Din Din” and that just kind of stuck after a while.

When she arrived she was thin and scrawny, having spent almost six months in a tiny, cramped cage – she was our “Size 0 Super Model.”

She ate fried rice, so she was our “Number 37 Takeout Special with Fried Rice.”

She was Joyce’s heart dog, and I got to borrow her on occasion when Joyce wasn’t around.

And then 11 years later Din Din got cancer.

And then she was gone.

And I miss you.

And it hurts.

by justin

I would say that I spent my quiet weekend casually pulling UTP Cat6 network cables through walls and floor cavities in the condo so I now have Ubiquiti Unifi WiFi Access Points on all four floors and excellent coverage. Loving the built-in PoE of the Unifi Dream Machine Special Edition by the way.

But what I really did this weekend was spend most of it carefully sliding storage organizers of electronics down the hallway, and moving towering shelves of books twenty-four inches to the right‡, shooing my wife away when she noticed the layers of dust on the shelves and books “NOW you need to clean, sort, organize and dust?!?”, fighting cats that wanted to play with the string that I was using to pull some cables “What the hell is it snagged on now?!?!” and swearing at the fish tape that decided it wanted to visit Never Never Land by turning left at the third joist on the right rather than just making a bee line for the precisely drilled hole in the decking. There was also some strenuous debating of whether I wanted to go back up five flights of stairs (for the umpteenth time) to retrieve the flat spade soldering tip instead of the needle nose tip I had in the iron in my hand just to re-solder the network cable splice. “Do I really need to use shrink wrap on this or will gaff tape work? Hmm. Five flights of stairs…. gaff tape it is then!”

There is now a distinct lack of Cat6 running down the stairwell and certainly less of a tripping hazard just so we can have low-latency Diablo 3 gaming sessions.

‡ Except you cannot actually shift an entire 400lb book shelf in one go without doing yourself an injury so it was more like moving three or four books at a time.

by justin

A few days ago I installed a new Unifi Dream Machine Special Edition, and hooked up some Unifi WiFi access points to it. I took the plunge and also ordered a Unifi G4 Doorbell Pro for replacement of our current “dumb” doorbell, along with a HDD to store the recordings on. The Unifi Dream Machine Special Edition has Unifi Protect (their security camera software) built-in, so I am going to migrate my security cameras off of the Synology. Yesterday the HDD arrived so I installed that 22TB Western Digital Purple HDD. Quite the capacious hard drive. Waiting for the doorbell camera to arrive, which should be some time today. I was wondering how to go about having a video screen in the home to keep tabs on the front door events, and Ubiquiti has this product line called “Unifi Connect” that is in Early Access, that are essentially touch screens specifically tailored for this very use case.

by justin

Installed a fancy Ubiquiti Unifi Dream Machine Special Edition (it’s a network router) into my home network on Friday. I like it. I’ve liked the Unifi line of products for a long time. Ran some of their earlier h/w (switches, gateway, etc) along with a gateway controller inside of a Docker container to manage a few Unifi PoE APs. The h/w & s/w has been quite robust through the years. That kind of “set it & forget it” reliability I want in my infrastructure and tools.

I held off from acquiring the UDM (Unifi Dream Machine) because I didn’t like the requirement of a cloud account, either to use it on an ongoing basis (since removed) or to perform initial configuration (since removed) or to perform initial setup with a dedicated mobile app (since removed) or to require an internet connection for setup (since removed). Plug the UDM into power, plug my laptop into the UDM via a network cable, open a browser and… go!

Manually copy over some configuration about IPs, DNS and firewall port forwarding rules from an ASUS router to the UDM and it just worked so smooth. Nice and easy.

I am happy to opt in to voluntarily sharing statistics and analytics of how I use the h/w & s/w with Ubiquiti, but I don’t want to be coerced into “cloud” anything or mobile app requirements to use a tool.

by justin

As my thoughts turn to the holiday season in the US and I contemplate the vast gulf across the Atlantic ocean from where I grew up to where I now live I start wistfully thinking of home, and then reality has a way of bringing me back down to Earth.

I’ve always had a family that has always been very open about discussing money, mostly because we never had any for the longest time and also because the first question many of them always ask is “how much you got on you?” which is a lead-in to a life crisis story from them.

Picture a scene of a traditional, large family, holiday gathering straight out of a Saturday Evening Post Norman Rockwell painting but several people are commenting about the food on your plate because they are secretly sizing up how much leftover food they can abscond with if they discourage you from eating.

Me: “No. No more financial emergencies. No more cash outlay. No more ‘I do not have insurance and it caught fire’ problems. No more ‘I just gotta have this and it is on sale.’ Everyone has tapped in to Lloyd’s bank this year, and the bank is now having cash flow issues, we are closed for business for the foreseeable future.”

The umpteenth family member/cousin/distant relation at the family gathering, half-jokingly saying: “I guess I will take my business elsewhere!”

Me (under my breath): “Oh noes, what ever will I do giving unsecured, interest free loans to people who have no intention of ever repaying me.”

And so, in this season, I give thanks to the family that shaped me into the adult I am, and those small inconveniences that we colloquially refer to as “the pond” (AKA the Atlantic Ocean for those who aren’t from the UK) and the fact that +8 GMT makes it really hard to reach out and call someone “to just catch up, see how you’re doing, and let me tell you about my broken down car that hardly runs” when it costs a dollar a minute to do so.

I’m only half-joking 😉

by justin

I have a little game on my personal C.V. website.

Had someone accuse me of stealing the entire game from someone else.

Then to prove this, they linked to the github repo, which was a fork of my own github repo.

And then after I pointed this out, they doubled-down and came back with the vague “well I’m sure you put something in there that isn’t originally yours.”

And then two days later came back with “So you’re ghosting me? Fine. I guess I won’t be moving you forward in the application process.”

Seriously dude, chill. I didn’t even apply for this position. You approached me, remember?

by justin

The past few weeks I’ve been wrangling some knotty algorithm that is to do with Elixhauser comorbidity calculations for the new 2022 rule set in the healthtech startup space. And the code I’ve written is very procedural, and hopefully easy to read, but also kind of like wading through thick mud in terms of understanding how values drop out of the bottom of the algorithm.

Let’s put it this way, this shit’s so arcane and weird that even the SAS code provided by the governing body that demonstrates the algorithm doesn’t adhere to the spec, and that point is even called out in the scant amount of documentation for the code. And let’s face it, SAS source code is bad enough as-is at the best of times, a 4GL language from the 1970’s that has grown warts in its old age, where any program written in SAS winds up looking like a 2,000 line DOS batch file, and I am thoroughly convinced that whoever wrote this particular chunk of SAS source code not only hates his job but also hates every person on this planet that doesn’t have to use SAS to get their job done.

Now once you explain the rules of how the Elixhauser algorithm works to someone, yourself having waded through three PDFs and the aforementioned SAS source code, the algorithm makes sense, in a roundabout fashion, but there’s still these “rules of exclusion” where “if the patient has this comorbidity, but it didn’t present on admittance, then this other rule applies instead.”

I think if I were implementing this multi-stage procedural algorithm all over again, I’d jettison all of the code I’ve written over the past three weeks and implement the entire shebang as a filter trie, AKA a Bloom Filter Trie, with about 25,000 inputs at the trie root and about 7 outputs as the trie leaves.
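To make the "many inputs at the root, few outputs at the leaves" shape concrete, here is a minimal sketch of a plain prefix trie that maps codes to a small set of categories. It is deliberately not a Bloom Filter Trie and not the Elixhauser rule set: the `PrefixTrie` class is hypothetical, and the codes and category names below are placeholders invented for illustration, not real diagnosis codes.

```python
from typing import Dict, Optional


class TrieNode:
    def __init__(self) -> None:
        self.children: Dict[str, "TrieNode"] = {}
        self.category: Optional[str] = None  # set only where a prefix ends


class PrefixTrie:
    """Map code prefixes to categories; lookups pick the longest match."""

    def __init__(self) -> None:
        self.root = TrieNode()

    def insert(self, prefix: str, category: str) -> None:
        node = self.root
        for ch in prefix:
            node = node.children.setdefault(ch, TrieNode())
        node.category = category

    def lookup(self, code: str) -> Optional[str]:
        """Return the category of the longest stored prefix of code, if any."""
        node = self.root
        best = node.category
        for ch in code:
            nxt = node.children.get(ch)
            if nxt is None:
                break
            node = nxt
            if node.category is not None:
                best = node.category
        return best


trie = PrefixTrie()
trie.insert("X1", "CATEGORY_A")    # placeholder prefix, not a real code
trie.insert("X12", "CATEGORY_B")   # a more specific prefix overrides the general one

print(trie.lookup("X123"))  # CATEGORY_B
print(trie.lookup("X19"))   # CATEGORY_A
print(trie.lookup("Z00"))   # None
```

Longest-prefix matching gives a natural home for "this more specific rule applies instead" cases, though exclusions that depend on other fields (such as whether a condition was present on admission) would still need extra logic on top.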

I’ll say this, my years of studying the Dungeon Master’s Guide and Player’s Handbook across all the editions of D&D, looking for exploits and rules that could work in my favour, have gone some way to helping me decipher DRG (Diagnosis Related Groups) and Elixhauser algorithms over these past two months.

by justin

My wife is out of town for a couple of weeks. I have fallen back in to my usual habit of surviving on breakfast cereal. Anybody that knows me personally also knows this isn’t how I ordinarily live, what with the several years of culinary school and all.

I bought a fully cooked lasagne which sat in my fridge since Monday last, and me with the full intention of cooking and consuming it over the course of three days.

Well here we are, six days later, and that lasagne needs to be ate.

“Reheat at 325 for 20 minutes.”

Done!

“Serves four.”

Serves four, it says.

And I took that personally.

Can already feel the sodium level in my body spiking.

I regret nothing.

Except maybe for the fact I am out of antacids.

Cannot feel my fingers… but I can hear every bubble fizzing in my glass of sparkling water. Is that normal?

by justin

When engineers take up hairdressing…

Saves me well over $300 a month colouring and styling my wife’s hair by myself. Plus she gets the exact look she wants.

by justin

Picked up a couple of lav microphones with recorders (Zoom F2-BT with Rode mics) to use for group vlogging. I reeeeaally wish Tascam would release an updated DR-10L but it is what it is. Went with Zoom because of a) battery life and b) 32-bit float recording.

I am liking the 32-bit float recording of the Zoom F2-BT. It is plug & play compared to my Sennheiser setup. Just click “record” and it goes, and I can fix any issues in post rather than worrying about clipping.
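Why float recording makes "fix it in post" possible can be shown with a few numbers. This is a simplified sketch of the arithmetic only, assuming samples where 1.0 is full scale; it says nothing about how the recorder's converters actually work, and `to_int16` and `normalise` are illustrative helper names.

```python
from typing import List


def to_int16(sample: float) -> int:
    """Quantise to 16-bit integer PCM; anything beyond full scale is clipped."""
    clipped = max(-1.0, min(1.0, sample))
    return int(round(clipped * 32767))


def normalise(samples: List[float], peak: float = 1.0) -> List[float]:
    """Scale float samples so the loudest one sits at the requested peak."""
    loudest = max(abs(s) for s in samples)
    if loudest == 0:
        return list(samples)
    gain = peak / loudest
    return [s * gain for s in samples]


# A take recorded too hot: two samples exceed full scale (1.0).
hot_take = [0.5, 1.0, 2.0, -1.5]

as_int16 = [to_int16(s) for s in hot_take]
print(as_int16)  # the 1.0 and 2.0 samples collapse to the same value

as_float = normalise(hot_take)
print(as_float)  # every sample keeps its relative level after gain reduction
```

In the integer path the over-range samples become indistinguishable from full scale, so the waveform shape is lost. In the float path they are just larger numbers, and turning the gain down afterwards recovers the original shape.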

Bought two of the Zooms. Want four, but will hold off until I know whether Tascam is putting out a newer version of the DR-10L.

by justin

The lessons I was taught about the things of my thoughts

is that if I write until I quit then my thinking becomes quiet.

I write.

I write to think.

I write quite a lot.

I write almost as much as I've thought.

I write until I quit.

I write to get the thing out of my head.

Which lets my thinking go on to my next thought when I step out of bed.

Because there's only so much room up there.

In that little cramped space inside of my hair.

Sometimes the thing I think about has taught me a lot about thought.

And sometimes the thought I have about the thing taught me nothing at all.

But I am grateful for the lesson it bought.

I don't think I could think without writing my thoughts down.

Which gives me a new thought:

Do I think to write?

Or do I write to think?

by justin

Oh just fuck off already!

by justin

Cute little GPIO buffer boards. So that when I start wiring up electronic components in a circuit to my tiny and overpriced and perpetually out-of-stock SBC (single board computer) that took forever to arrive, for a project I am working on, I don’t accidentally turn said single board computer into a blue smoke generator because I wasn’t paying attention, or had a connection go awry. No matter how much I earn, I still feel stupid when I blow up a $40 part because I didn’t check my circuit properly, or had a dab of solder in the wrong place.

by justin

Me: “I need to transmit about 8K of data…”

The Helpful Plugin: “I think you mean 8 kilobits.”

Me: “I need to transmit a little over 8 kilobytes of data, which is around 8,000 bytes.”

The Helpful Plugin: “It’s 8,192 bytes actually.”

Me: “I’m going to use a for loop here.”

The Helpful Plugin: “You should use a for each loop.”

Me: “I’m going to use a for loop here that will iterate, not enumerate, from index 0 through 8,200…”

The Helpful Plugin: “That looks bigger than 8KB of data, also, zero is a hard-coded number, you should consider a named constant!”

Me: “As I iterate over the bytes, I will perform a Fast Fourier Transform on the data.”

The Helpful Plugin: “Have you considered parallelizing it to make it run faster?”

Me: “Over a very slow, serial connection that is prone to timing issues. But it appears that my math is off…”

The Helpful Plugin: …strangely silent when it comes to a real bug…

Me: “Ah, here is my math error, let me make a note in a git commit…”

The Helpful Plugin: “You should put a comma after the word ‘and’ to make it more grammatically correct.”

Me: “Shut. The. FUCK. Up!”

by justin

That is a freakin’ big UPS (uninterruptible power supply). At least, it is big for personal office needs. Weighs around 50lbs/23kg. Li-Ion batteries. Should run my machine learning workstation just fine. Found an amazing deal on an APC Smart-UPS SMLTL1500RM3UC.

by justin

I got sent a PDF that covers salary information for #softwaredevelopers and other #software related roles for a whole bunch of companies across the #uk, from Junior Developer through to #CTO. I can only describe the numbers as “stagnant.” The average Senior Software Developer #compensation in the survey hadn’t moved by more than 15% in 27 years. I can only conclude that the salary information was gathered across all of the UK, from a random two-person non-tech company in Nowheresglen, Scotland all the way through to Fortune 500 BigCorp in #London #FinTech, and then all those salaries were averaged.

Back in the 80’s the UK government was prescient in striving to put a computer in every classroom and almost every home. The initiative bred an entire generation of technology literate people and budding software developers. And looking at numbers of immigrants in the US and Canada (data available from various govt sources and websites) for the period 1990 through 2010, who have a country of origin of the UK and also list themselves as software developers, I would say a high percentage left for the US and Canada to get paid more. Talk about squandering opportunity.

The sad thing is, I don’t think that UK companies have learned anything from that, and the best and brightest continue to be drained from the country.